LDoLL Queuing

A comparison between LDoLL and FIFO

The first comparison was made between FIFO and LDoLL. With FIFO as a reference (the bare minimum), the performance of LDoLL can be estimated. It was known that, in the case of Poisson-distributed traffic, LDoLL would deliver the lowest possible delay for low delay traffic and the lowest possible loss for low loss traffic.

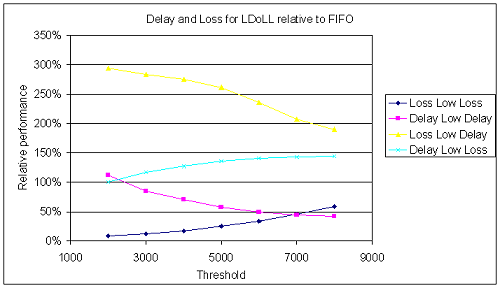

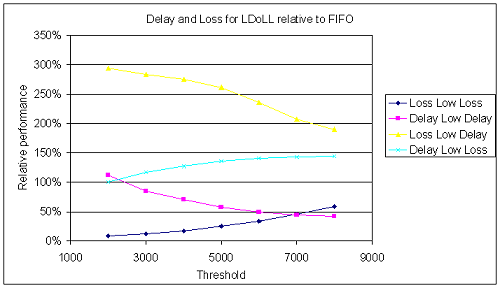

The first graph illustrates the effect of the threshold setting on the loss and delay characteristics.

Figure 1. The loss and delay effects of the threshold used by LDoLL. The characteristics of FIFO Queuing are also shown. Of course, the threshold setting of LDoLL does not influence FIFO. The variation in the delay and loss is caused by the randomness always present in simulations.

This graph clearly shows that the delay in an LDoLL queue can be significantly lower than in a FIFO queue. Therefore, we can say LDoLL "works". Further simulation, however, showed that the effect of LDoLL queuing decreases when the buffer size is increased.

However, the delay is still a function of the load, and will increase when the load increases. Although the delay is significantly lower, guarantees cannot be given.

In the case of the loss of Low Loss traffic, the same effect is visible. Using LDoLL queuing results in a lower loss for Low Loss traffic than FIFO. But again, the loss still increases with an increasing load, and again, no guarantees can be given.

An important fact, however, is that although low delay traffic suffers a lower delay with LDoLL queuing, it does suffer a higher loss. Conversely, the low loss traffic suffers less loss than it would in a FIFO queue, but it does get a higher delay.

We can call LDoLL an exchange mechanism. Traffic can get a better (lower) delay at the cost of a higher loss rate, or a lower loss rate at the cost of a longer delay. As Stavrov already concluded, LDoLL can serve two classes of traffic: traffic that requires a low delay but can tolerate loss, and traffic that requires a low loss but can tolerate delay. Two other types of traffic, namely traffic that requires both low loss and low delay, and traffic that can tolerate both loss and delay, have to be handled in a different way, or one has to increase the threshold to an extremely high level.

As already visible in figure XXX, raising the threshold reduces both the delay and the loss of the Low Delay traffic. Due to the high threshold level, Low Loss will only receive service when there are almost no Low Delay packets waiting. However, this might seem somewhat counterproductive, since Low Loss keeps storage priority over Low Delay. The other option is to reduce the threshold to zero, which means that Low Loss gains absolute priority.
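The role of the threshold can be made concrete with a small sketch. The exact LDoLL service rule is not spelled out in this section, so the code below assumes a queue length threshold: Low Loss is served first only when more than `threshold` Low Loss packets are waiting, and otherwise Low Delay goes first. Storage priority is modelled by letting an arriving Low Loss packet push out a Low Delay packet when the buffer is full. The class name `LDoLLBuffer`, its parameters, and the choice to push out the newest Low Delay packet are illustrative assumptions, not the simulated implementation.

```python
from collections import deque


class LDoLLBuffer:
    """Shared buffer for two classes: low delay (LD) and low loss (LL).

    Hypothetical sketch: the service and push-out rules are assumptions.
    """

    def __init__(self, capacity, threshold):
        self.capacity = capacity    # total number of packets the buffer holds
        self.threshold = threshold  # LL backlog above which LL is served first
        self.ld = deque()
        self.ll = deque()

    def enqueue(self, packet, low_loss):
        """Store a packet; return the packet that was lost, or None."""
        dropped = None
        if len(self.ld) + len(self.ll) >= self.capacity:
            if low_loss and self.ld:
                # Storage priority: LL pushes out an LD packet
                # (assumed here: the most recently stored one).
                dropped = self.ld.pop()
            else:
                return packet  # no room: the arriving packet is lost
        (self.ll if low_loss else self.ld).append(packet)
        return dropped

    def dequeue(self):
        """Return the next packet to transmit, or None if the buffer is empty."""
        # LL is served when its backlog exceeds the threshold,
        # or when no LD packet is waiting.
        if self.ll and (len(self.ll) > self.threshold or not self.ld):
            return self.ll.popleft()
        if self.ld:
            return self.ld.popleft()
        return None
```

With `threshold=0` any waiting Low Loss packet is served first, which corresponds to the absolute priority described above. With a very high threshold, Low Loss is served only when no Low Delay packet is waiting, while still retaining its storage priority.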

Voice traffic requires a low delay, but it cannot tolerate a high loss rate. Although increasing the threshold level might improve the delay suffered, a certain amount of loss will always be present. Also, the delay will always increase when the load increases.

To improve the delay characteristics of the low delay traffic, and also to guarantee some maximum delay figure, a small adaptation can be made to the LDoLL mechanism. Low delay packets that have waited longer than a certain time are dropped instead of being sent when it is their turn. This can potentially increase the loss of the low delay packets, but it also guarantees that the delay of the packets that are delivered stays below a certain limit.
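A minimal sketch of this adaptation, assuming each low delay packet is time-stamped on arrival and checked only when it reaches the head of the queue. The function name `next_fresh_packet` and the parameter `max_wait` are hypothetical; the text does not say whether expired packets are discarded at service time, as here, or as soon as their deadline passes.

```python
from collections import deque


def next_fresh_packet(ld_queue, now, max_wait):
    """Return (payload, dropped) for the next LD packet that is still in time.

    ld_queue holds (arrival_time, payload) tuples, oldest first.
    Packets that have waited longer than max_wait are discarded.
    payload is None if no packet in the queue is still in time.
    """
    dropped = 0
    while ld_queue:
        arrival, payload = ld_queue.popleft()
        if now - arrival <= max_wait:
            return payload, dropped
        dropped += 1  # too late: drop instead of sending
    return None, dropped
```

Checking only at service time keeps the sketch simple, but expired packets then occupy buffer space until they reach the head of the queue. Discarding them at the moment the deadline passes would free that space earlier.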

This can also improve the performance of the low loss traffic, because the effective load is reduced. Simulation shows that it is indeed possible to limit the delay in this way. However, under loads high enough to gain a serious reduction in delay, the loss rate of the low delay traffic will rise to as much as 40%. The performance of the low loss traffic remains relatively unaltered: losses decrease by a few percent. Therefore, dropping packets that are too late is not really an option for improving a single-buffer system. When considering a complete network, one might still be tempted to drop low delay traffic earlier, because that can reduce the load downstream and thereby improve performance there.

Another possibility is to limit the amount of buffer space that might be used for LD packets.

Simulation showed that this too reduces the LD delay, but it also increases the loss rate. However, this last strategy might be easier to implement in hardware, since time-stamping is no longer needed.
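The buffer limit for LD packets amounts to a simple admission check, sketched below. The function `admit` and the parameter `ld_limit` are hypothetical names, and any push-out of LD packets by arriving LL packets is left out for brevity. Only queue lengths are compared, which is why no time stamps are required.

```python
def admit(ld_len, ll_len, capacity, ld_limit, low_loss):
    """Decide whether an arriving packet may be stored.

    ld_len, ll_len: current number of LD and LL packets in the buffer.
    capacity: total buffer size in packets.
    ld_limit: assumed maximum number of LD packets allowed in the buffer.
    low_loss: True for an LL packet, False for an LD packet.
    """
    if ld_len + ll_len >= capacity:
        return False  # buffer is full
    if not low_loss and ld_len >= ld_limit:
        return False  # LD share of the buffer is used up
    return True
```

Because fewer LD packets can be waiting at any time, an admitted LD packet has fewer LD packets ahead of it, at the price of more LD packets being refused on arrival.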


Copyright © 2000 Philips Business Communications, Hilversum, The Netherlands.

See legal statement for details